A/B Testing: 4 Common Mistakes Ecommerce Retailers Make

eCommerce retailers prefer A/B testing because it takes the guesswork out of site planning, strategy, and experimentation, and gives them data they can use to make better decisions. It is a method of discovering which design, content, or functionality is more successful with site visitors, and therefore which changes on the website lead to the desired outcome. It allows retailers to test and tweak pages, or a particular element on a page, to determine what positively affects consumer behavior.

What A/B Testing Really Means

This is a proven method that helps retailers avoid expensive mistakes. Effective A/B testing ideally involves:

- Repeatedly testing improvements until the best possible version is achieved – each test builds on the results of the previous tests, so the overall website can be improved bit by bit to resonate with customers’ interests and meet business goals.

- Running experiments and measuring data to identify cause and effect – retailers can test hypotheses in small, controlled environments to identify winning strategies before scaling those efforts.

For example, let’s assume an eCommerce manager working for a fashion brand wants to identify the right recommendation module that aids conversions.

To do that, they can A/B test two different types of recommendation modules to see which one works best. The manager exposes two user groups to journeys that differ only in the recommendation module, while keeping everything else constant. This isolates the impact of the modules and allows the performance of one to be compared against the other.
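One common way to split visitors into two stable groups is to hash a visitor identifier. The sketch below is illustrative only: the function name `assign_variant`, the experiment name, and the user IDs are hypothetical and not tied to any particular tool.

```python
import hashlib


def assign_variant(user_id: str, experiment: str, variants=("A", "B")) -> str:
    """Deterministically map a user to one variant of an experiment.

    Hashing the experiment name together with the user ID means the same
    visitor always sees the same variant, and different experiments split
    the audience independently of each other.
    """
    digest = hashlib.sha256(f"{experiment}:{user_id}".encode("utf-8")).hexdigest()
    bucket = int(digest, 16) % len(variants)
    return variants[bucket]


# Hypothetical usage: decide which recommendation module a visitor sees.
variant = assign_variant("user-12345", "recommendation-module-test")
```

Because the assignment depends only on the inputs, a returning visitor stays in the same group across sessions, which keeps "everything else constant" for that visitor.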

At the same time, many retailers and marketers tend to make a few mistakes when it comes to A/B testing that can prove detrimental to their business.

We have listed the top 4 mistakes that retailers should look out for:

1. Testing Without Goals or Hypothesis

The first step in conducting an A/B test is to identify the business problem that needs to be solved and the quantifiable metric or KPI (click-through rate, conversion rate, etc.) that will be used to ascertain whether the goals of the experiment are met.

A testing hypothesis is a statement that clearly states what changes need to be made, why they must be made, and their expected impact.

Once a goal is identified, retailers can begin generating A/B testing ideas and hypotheses to create customer journey variations. The three components that make a strong hypothesis are:

- Defining a problem

- Describing a proposed solution

- Setting criteria for success/failure and using metrics to measure results
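The three components above can be written down before the test starts, so the success criterion is not adjusted after the results are in. The following is a minimal sketch under assumptions: the `Hypothesis` class, its field names, and the 5% threshold are all hypothetical, and a relative-lift check on its own does not establish statistical significance.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Hypothesis:
    problem: str               # the business problem being addressed
    proposed_solution: str     # the change being tested
    metric: str                # the KPI used to judge the outcome
    min_relative_lift: float   # e.g. 0.05 = variant must beat control by 5%

    def is_met(self, control_value: float, variant_value: float) -> bool:
        """Check the pre-agreed success criterion against observed values."""
        if control_value <= 0:
            raise ValueError("control_value must be positive")
        lift = (variant_value - control_value) / control_value
        return lift >= self.min_relative_lift


# Hypothetical example values.
h = Hypothesis(
    problem="Few product-page visitors add items to the cart",
    proposed_solution="Show a recommendation module above the fold",
    metric="conversion rate",
    min_relative_lift=0.05,
)
result = h.is_met(control_value=0.040, variant_value=0.043)
```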

2. Testing Too Many Factors At One Go

It is easy to test too many variables at once. When retailers change many unrelated variables in the same test, they cannot tell which change is affecting conversions and may miss out on an improvement.

This does not rule out changing multiple elements in one test. With solid research and a clear hypothesis, retailers can, for example, introduce a whole new layout for their product page in which most of the page’s elements are changed. The trade-off is that the result shows whether the new layout as a whole performs better, not which individual element made the difference.

3. Target Audience: No Clarity, No Segmentation

When testing, retailers should always keep their target audience in mind and design experiments FOR them. They are the ones who validate what works or doesn’t work on the website. To truly understand the audience, retailers should create shopper personas and map their understanding of them to the shopper behavior on the site to identify possible tests that can be run.

When it comes to A/B tests, the more control retailers have, the better. Whenever retailers do any sort of analysis on the website, whether for split tests or not, they need to understand and consider segments.

The most common segments that eCommerce teams use during A/B testing are returning visitors, new visitors, visitors by country, visitors by traffic source, different days, different devices, and different browsers.

The important thing to know about segments is that results for visitors from two different segments should not be compared directly with each other.

For example, let’s assume that on a particular day a website has 100 new visitors and 100 returning visitors. How they browse, how long they stay on the site, how much they spend, and so on will vary from one segment to the other, so a variant’s performance should be compared with the other variant’s performance within the same segment.
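A per-segment breakdown can be sketched as follows. The data layout (tuples of segment, variant, and a converted flag) and the function name `conversion_by_segment` are assumptions made for illustration, and the sample rows are made up.

```python
from collections import defaultdict


def conversion_by_segment(visits):
    """Return the conversion rate for every (segment, variant) pair.

    visits: iterable of (segment, variant, converted) tuples.
    """
    counts = defaultdict(lambda: [0, 0])  # key -> [conversions, visitors]
    for segment, variant, converted in visits:
        counts[(segment, variant)][0] += int(converted)
        counts[(segment, variant)][1] += 1
    return {key: conv / total for key, (conv, total) in counts.items()}


# Hypothetical sample data.
visits = [
    ("new", "A", True), ("new", "A", False),
    ("new", "B", False), ("new", "B", False),
    ("returning", "A", True), ("returning", "A", True),
    ("returning", "B", True), ("returning", "B", False),
]
rates = conversion_by_segment(visits)
```

Here variant A should be compared with variant B among new visitors, and separately among returning visitors, rather than comparing new visitors on A with returning visitors on B.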

4. Comparing Different Days’ Results

Regular traffic on a website differs vastly from holiday traffic. Even a single day of holiday traffic can affect the test results, which can lead retailers to optimize their website for people who visit the site only during the holidays.

Retailers should either exclude these days’ data, since it might skew the test results, or run the test for a longer period of time if these days fall within the test duration.
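Excluding specific days before analysis can be as simple as filtering the daily totals. This is a minimal sketch: the row structure, the function name `exclude_days`, and the numbers are hypothetical.

```python
from datetime import date


def exclude_days(daily_results, excluded_days):
    """Return only the daily rows whose date is not in excluded_days."""
    excluded = set(excluded_days)
    return [row for row in daily_results if row["day"] not in excluded]


# Hypothetical daily totals for one variant.
daily_results = [
    {"day": date(2023, 11, 22), "visitors": 1000, "orders": 30},
    {"day": date(2023, 11, 24), "visitors": 4000, "orders": 260},  # holiday spike
    {"day": date(2023, 11, 27), "visitors": 1100, "orders": 33},
]
regular_days = exclude_days(daily_results, [date(2023, 11, 24)])
```

The same exclusion has to be applied to every variant in the test so that the groups are still compared over identical days.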

Conclusion

The primary goal of A/B testing, more than revenue or engagement, is to derive as many insights as possible. Leveraging these insights can help retailers understand different aspects of customer behavior.

It is important to analyze performance metrics to get a comprehensive understanding of what worked and what did not.

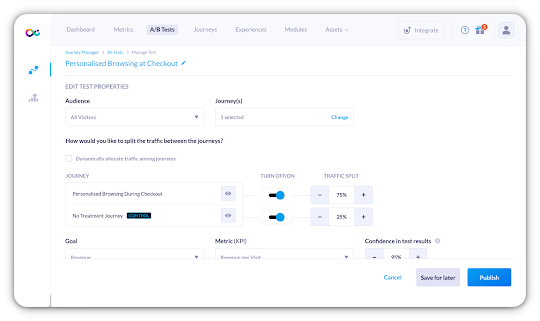

Vue.ai’s personalization engine allows retailers to create personalized eCommerce shopper journeys and A/B test them to find the winning ones. It also provides built-in analytics to track all relevant metrics, which enable you to understand the performance of different customer journeys.

There is no single journey that can fit all customers. Testing multiple customer journeys allows you to gain key insights into customer behavior and identify the best-suited journey for the audience.

The idea is to continuously experiment with different combinations of experiences and highly customized content across different audiences to maximize the retailer’s business goals. Vue.ai supports a frequentist approach to A/B testing in which the Vue.ai user is completely in control of executing, monitoring, and concluding A/B tests.
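As a general illustration of what a frequentist comparison looks like, a two-proportion z-test is one standard way to compare the conversion rates of two variants. This sketch does not describe Vue.ai’s implementation; the function name and the sample numbers are hypothetical.

```python
import math


def two_proportion_z_test(conv_a, n_a, conv_b, n_b):
    """Return (z, two-sided p-value) for the difference in conversion rates.

    conv_a, conv_b: number of conversions in variants A and B.
    n_a, n_b: number of visitors in variants A and B.
    """
    p_a = conv_a / n_a
    p_b = conv_b / n_b
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        raise ValueError("standard error is zero; rates are all 0 or all 1")
    z = (p_b - p_a) / se
    # Two-sided p-value from the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


# Hypothetical counts: 200/5000 conversions on A, 260/5000 on B.
z, p_value = two_proportion_z_test(200, 5000, 260, 5000)
```

A small p-value suggests the observed difference is unlikely under the assumption that both variants convert at the same rate. The significance threshold and the test duration should be fixed before the test starts.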
